Lies, Damn lies, and Intel's Terrible, Horrible, No-Good, Very Bad October

Hamas rocket fire and a flubbed product launch? Can it get worse? I guess, at least - they aren't selling the fabs (yet)

(The Bizarro world version of the “Stonks” meme, needs no accreditation)

MORE COMPUTER CHIP NEWS! Woo! Given prior tech-y pieces have garnered some interest, I’ll keep the niche going. I feel like on average, nobody cares about Intel, and that they’ve become ubiquitous - which is a horrible place for a brand to revel in, the shadows - where criticism and attention often aren’t levied. So, if you enjoyed our take on Intel in Haifa, and you were enthralled by our prior writings on the US Empire using semiconductor manufacturing (including EUV) as a shield/cudgel through companies like ASML and TSMC, buckle up, knucklefuck. This is sure to be fascinating for you. We’re even including audio-visual references from leading “Computer Hardware Review Experts”, whatever the fuck that is. YouTube videos of nerds and old screenshots. There.

Onto the news. INTEL has released new products! Rejoice, nerd and shareholder alike! BUY THEM IMMEDIATELY! The new addition to the Intel Processor (TM) family is the 14th “Generation”, if one can call such meager improvements and refinements a generational leap. Enter products designated i5-14600K, i7-14700K and i9-14900K. If that just seems like an incoherent string of characters, don’t worry, in this article I hope to help you understand the Intel product line and segmentation of the consumer range, why that matters to you - the consumer (or shareholder), where Intel is going absolutely wrong to split the baby so finely, how it can be addressed in the future, and how you can avoid their mistakes today (and possibly through the Holiday shopping season and tax time next year). I’ll also be offering my own opinion and consumer advice about available competitors, where they match up, who has the edge, and why you should care.

FULL DISCLOSURE: A little backstory about myself before we begin. I have a resume of more than 15 years that spans a number of industries, including direct/retail sales, IT and consumer tech, though I am not currently employed, paid or prompted by anyone, nor given any material or service in kind, to write any of the above or below, or anything ever in this publication. I do not take review samples, monies, goods, services or travel/lodging for any product reviews, brand commentaries, or other written or produced materials, and I do not see a time where I will. I will clearly identify if that changes. In the meantime, I retain full editorial control over my productions and writings, and do not give any brand or corporate entity any prior knowledge or prior access - they may view it at time of publishing like anyone else. They do not have any editorial input. Counterspin - Lies, Damn Lies, and Spin! does not, as a matter of courtesy, reach out to mega-corporations for spin. They have their own webpages if you’d like to read their writings. INTEL CORP and all other trademark and license holders mentioned below are the respective owners of the trademarks and copyrights, and I do not pretend to own the rights. I am merely discussing them.

CONFLICT OF INTEREST: I have a familial tie to one of the subjects of this piece. My partner is a contract worker on behalf of INTC 0.00%↑ , and beyond his own first hand experiences working for a subcontractor at one of their facilities, he is unable to provide input and is not cited as a source, nor will I accept any input from him in the making. Not only does he lack the technical and consumer expertise, he is simply not interested in the industry in the manner I am. In an effort to keep the distance as vast as possible between his employ and my own opinion, I must make clear that my writings are not a reflection of his direct employer, nor of the contract holder Intel, nor of him. They are mine, and mine alone. They are only a reflection of myself and my perspective. He’s asleep before his 12 hour shift as I write this. My little cart pushing reticle runner.

On to the news. As you can see in the above stock ticker (at time of writing), Intel is down nearly a percent - on the day of one of their most important product launches post-COVID funk. That’s a bad omen already, and we haven’t even started with the real bad news.

The Intel Core(R) 14th Generation Family of Processors (1998-2024)

So I did promise that I would help make sense of their product segmentations. Let’s make good on that first, so the rest of it can make a bit more sense. I hope you’ve shopped for a computer in the last 25 years. You do know what a core and a thread are, right? No? Fuck. This is going to get complex.

*sigh*

Let’s go back in time to make it a little easier, to 2001, back when George W. Bush was bopping around to N*Sync while he was destroying Afghanistan. At that point, Intel was making something called the Pentium 4. You might have even had one (or if you were lucky, a few) in your home - in various flavors, up to 3.06 GHz - an astonishing speed at the time. Sadly, all good things must end, and even it reached the end of what it could do - by late 2002 Intel engineers were working fastidiously on a stopgap measure that later in 2003 would be introduced to the world as “hyperthreading”; a concept where a single CPU core would be split into two ‘threads’, giving the user 2 ‘logical’ (i.e. seen by software as) processors, without needing two physical processors - a common situation in years prior. The singular die could itself work on two tasks at once. A breakthrough.
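For the nerds in the back: here is a minimal sketch of that ‘logical’ versus physical distinction as software sees it. The helper name `physical_core_estimate` is made up for illustration, and the division only holds under the assumption that every core exposes the same number of threads (which stops being true once E-cores show up). `os.cpu_count()` is a real Python standard library call and reports logical processors, not physical ones.

```python
import os


def physical_core_estimate(logical_cpus: int, threads_per_core: int = 2) -> int:
    """Estimate physical cores from the logical CPU count.

    Assumption (not guaranteed on real hardware): every physical core
    exposes the same number of hardware threads.
    """
    if logical_cpus < 1 or threads_per_core < 1:
        raise ValueError("counts must be positive")
    # A hyperthreaded core shows up as two logical processors,
    # so halve the logical count (never reporting fewer than one core).
    return max(1, logical_cpus // threads_per_core)


# os.cpu_count() returns the number of *logical* processors (or None).
logical = os.cpu_count() or 1
print(f"Logical processors seen by software: {logical}")
print(f"Estimated physical cores if hyperthreaded: {physical_core_estimate(logical)}")
```

A hyperthreaded single-core Pentium 4 would report 2 logical processors here, which the helper maps back to 1 physical core.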

(Your family had money/you were a total nerd if your computer had this badge back in the day - Registered trademarks of Intel Corp., used w/ attribution)

Snap to the end of 2005. The HT Pentium 4 itself is long in the tooth. It had been a good 6 year run from 2000 to 2006 for Pentium 4. Pentium 4 in its barest form however would be remembered for running slow, hot, and being an inordinate consumer of electricity. The stopgap measure of hyperthreading made these issues worse, though it did at least improve performance. As history would soon tell, the issue was in how the core was cutting up software instructions into steps - another of Intel’s 8th wonders of the world, one that broke the computing instruction pipeline we had grown accustomed to in the late 20th century. It was so bad that, in building what came next, Intel had to go backwards to Pentium III. Wait - don’t go - I promise this story is getting to 2024. Albeit as slowly as an Intel comp- sorry, that was a cheap shot.

Enter Yonah (the basis for Core) - in a fun tie-in back to previous work, designed in Israel at Intel Haifa. 2005-2006. Core/Core2 was a breakthrough architecture, a total rewriting of the x86 skeleton that had underpinned everything from the 8086 in the late 1970s through Pentium II, III, and 4. Think of Core2 (Core2Duo, Core2Quad, and the ill-fated CoreDuo/CoreSolo) as Pentium III Director’s Cut, where Intel finally got its Groove Back.

In fact, Core/Core2 was so good that it caught the attention of a massive system integrator, Apple Computer - itself trying to find a new mojo, shaking off an era of late 90s funk.

(Then-CEO of Apple Computer Steve Jobs taking the ceremonial CoreDuo/CoreSolo Wafer #0 from then-CEO of Intel, Paul Otellini, at Macworld 2006 - via YouTube - all rights owned by the video creator - NOTE: Don’t be a Steve, never handle bare silicon wafers with bare hands - follow GMP)

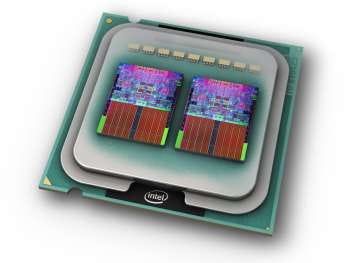

So, what’s the distinction between CoreDuo/CoreSolo and Core2Solo, Duo, and Quad? Put simply, 32-bit and 64-bit instructions. CoreDuo/CoreSolo were a 32-bit architecture, while Core2 would be Intel’s first foray into AMD64, or 64-bit computing. The segmentations within were designated by the number of CPU cores and the clock speeds they could reach - Solo had a singular core, Duo had 2, and Quad had 4. Why did I say AMD up there? AMD beat Intel to 64-bit computing, something Intel had dragged its feet on and deemed unnecessary. Oh my, how times change.

(Artist’s rendering of an Intel Core2Quad ‘Yorkfield’ Q6650 package showing die under the Integrated Heat Spreader, via Techgage)

(Die shot rendering of ‘Kentsfield’ Core2Quad, ‘L2 Cache’ is a small amount of nearest-neighbor memory that is quickly accessed by the ‘core’ as necessary - via AnandTech)

So, I hope the visual mediums above are enough to help you understand this (17 year old) product segmentation. I neglected to mention Pentium and Celeron, as these are just cache-reduced single/dual core variants with other weaker components included in the die to reduce cost. These were, and still are, terrible, and not worth mentioning. Frankly in my take this part of the range should be reduced to nil, and in recent times with Intel Processor N100/N305, this appears to be happening.

So.. What does any of that have to do with 2024’s product line, exactly?

As Core2 aged, a new product line was introduced - simply ‘Core’, in 2009. This is the birth of the modern nomenclature, i3/i5/i7 (with i9 to come later), and the modern segmentations - sort of. In terms of architecture, it is largely identical to Core2 with some changes to interfaces and layouts for efficiency. This “tick-tock-tock-tock-tock-tock-tock” method of iteration was successful for Intel for many generations. This is where your Intel 7th Generation i7-7700K and so on come from. Who still doesn’t remember their 4770K? Anyways - Count tocks from the tick and that’s where you are. As of today, we are 13 tocks from the original ‘tick’ of Core (2009).

(ED: The final letter of the Intel product name is crucial, with K being a designator for core multiplier unlocked/’can be overclocked’; other letters mean other things. Chips designated KF are w/o integrated graphics chiplet but are otherwise materially identical to K SKUs. These K/KF chips are highly desired by vidya gamerz, enthusiasts and consummate professionals who enjoy tinkering or need to get every last MHz of performance out of the CPU - consider the K/KF family somewhere between fancy toys and high end workstation brains, which are otherwise classified as Xeon. Hope this helps.)
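If you would rather let a computer read the alphabet soup, here is a rough sketch of the naming scheme described above. Everything in it is illustrative: `parse_core_model` and `CoreModel` are names I made up, the pattern only covers the four and five digit desktop names used in this piece (i7-4770K, i7-7700K, i7-14700K), and it only decodes the K and F letters discussed here. The ‘tock’ is just this article's own counting, generation minus one.

```python
import re
from typing import NamedTuple


class CoreModel(NamedTuple):
    tier: str          # "i3", "i5", "i7" or "i9"
    generation: int    # marketing generation, e.g. 14
    tock: int          # this article's counting: generation - 1
    sku: str           # last three digits, e.g. "700"
    suffix: str        # trailing letters, e.g. "K" or "KF"
    unlocked: bool     # K = core multiplier unlocked
    has_igpu: bool     # F = no integrated graphics


# Hypothetical pattern: tier, 4-5 digit model number, optional letters.
_MODEL = re.compile(r"^i([3579])-(\d{4,5})([A-Z]*)$")


def parse_core_model(name: str) -> CoreModel:
    match = _MODEL.match(name.strip())
    if match is None:
        raise ValueError(f"unrecognised model name: {name!r}")
    tier, digits, suffix = match.groups()
    # Everything before the last three digits is the generation:
    # "4770" -> 4, "14700" -> 14.
    generation = int(digits[:-3])
    return CoreModel(
        tier=f"i{tier}",
        generation=generation,
        tock=generation - 1,
        sku=digits[-3:],
        suffix=suffix,
        unlocked="K" in suffix,
        has_igpu="F" not in suffix,
    )


for model in ("i5-14600K", "i7-14700K", "i9-14900K", "i7-4770K"):
    print(parse_core_model(model))
```

Older three digit names and the other suffix letters are deliberately left out, so treat it as a decoder ring for this article rather than for Intel's whole catalogue.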

I should be fair, in that time we have had some impressive and important changes to the family, ignoring what came prior to 2020 because we simply don’t have time to remind the world of what Intel used to be able to do - 9th tock (10th Generation, 2019-20) introduced LGA1200, a new pinout arrangement and motherboard socket/packaging combo, along with some other welcome changes. 10th tock (11th Generation, 20-21) introduced minor revisions, including a new generation of integrated graphics processing tile, and more PCIe interconnectivity. 11th tock (12th Generation, 21-22) saw core clock increases and the introduction of P-Cores; aka the regular above discussed cores, and E-Cores: single thread, Netbook-tier low-clock speed efficiency cores - plus the required support code/hardware, along with PCI-e Gen 5 - and also introduced the “Intel 7” node/10nm SuperFIN lithography and new LGA1700 packaging; 12th tock (13th Generation) was mostly a hold-over with increased clock speeds and some additional E-cores on some models. So what did 13th tock (14th generation) introduce, for the K SKU range? Let’s look at Intel’s own ARK tool to compare the range. We’re going to use the middle-of-the-pack i7-1*700K as our example.

Not much changed. In fact it’s hard to see exactly what changed. I see some increased clock speeds but it appears nothing else has changed. As far as holdovers go, I am thoroughly surprised at just how much was held over - wow, four additional efficiency cores and a 100MHz overall clock speed bump, how generous! As far as I can see it, Intel did not appear to have anything ready for launch and instead simply relaunched a product already on sale, under a different SKU, and pretended it had reinvented the wheel.
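To put a number on those four extra E-cores, here is a back-of-the-napkin sketch of the thread math, using the rule from earlier that P-cores are hyperthreaded (two threads each) and E-cores are single thread. The core counts plugged in (8P+8E for the i7-13700K, 8P+12E for the i7-14700K) are my reading of Intel's public spec listings, not something quoted in this article, so treat them as assumptions and check ARK yourself. The function name `logical_threads` is made up.

```python
def logical_threads(p_cores: int, e_cores: int) -> int:
    """Threads seen by software on a hybrid chip.

    P-cores are hyperthreaded (2 threads each); E-cores are single thread.
    """
    return 2 * p_cores + e_cores


# Assumed core counts (verify against Intel ARK before quoting them).
chips = {
    "i7-13700K": {"p_cores": 8, "e_cores": 8},
    "i7-14700K": {"p_cores": 8, "e_cores": 12},
}

for name, cores in chips.items():
    print(f"{name}: {logical_threads(**cores)} threads")

old = logical_threads(**chips["i7-13700K"])
new = logical_threads(**chips["i7-14700K"])
print(f"Extra threads from the added E-cores: {new - old}")
```

Under those assumptions the whole "generation" comes out to four more single thread efficiency cores, and every P-core is exactly where it was.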

Here’s just some headline specs in photos for those of you who didn’t click:

You can keep going down the page but it is, near as makes little to no difference, the damn same thing. I didn’t include 9th tock (Gen 10) in the screenshots as it is just too old to compete. It is included in the above link though. If Intel considers this 13th tock a “generation”, it is out to lunch, and that is a dangerous place to be right now.

INVESTORS FLEEING LIKE RATS FROM A PERFECTLY ORDINARY, IF A LITTLE SLOW SHIP FOUNDRY

Okay okay joke headlines aside, Intel has been facing a battering in the past five years, stemming from the loss of major client Apple; yes, the people I showed above. They left Intel for greener pastures, making their own computer CPU designs and sending them off to TSMC along with the Apple Watch and AirPods designs, to be printed by magical Taiwanese elves in Hsinchu Science Park. Vertical integration wins again.

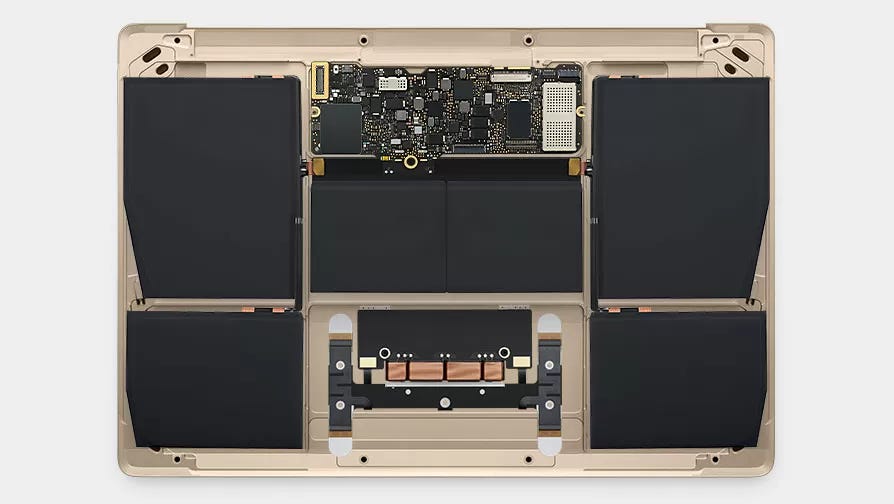

Intel has still not been able to grasp why its prior lover (lifestyle brand, as current Intel CEO Gelsinger refers to them) left them. Apple (AAPL) has been, since the days of the Oregon Trail on your grandma’s IIGS, on a quest to find the most efficient CPU design, balancing performance per watt. It started this whole affair with Motorola, which led to a partnership between Apple, Motorola and IBM: PowerPC. Once that ran its course, it bought from Intel - but it was never satisfied. Though quarrels between companies are best kept behind closed doors, it is obvious to even laymen that Apple initiated the breakup because of the 2015 MacBook Retina “Core M”.

Why that 2015 MacBook, specifically? If anyone knows the story of Olden Apple, Steve obsessed over thin, fanless (silent) designs. The 2015 MacBook Retina was one of those fanless/silent designs - probably one of the last of his era, and it used an Intel processor - one that should, according to Intel, have met Apple’s requirements just fine, at just 3.5 watts TDP. The chips used were ultra-low-power Core M mobile chips that should have by anyone’s understanding operated in a very cool, low power state. A match made in heaven. Right?

(What’s under the fancy panel of a little 2015 MacBook Retina, no fans - just a small heat sink - Via TechSpot)

If anyone who owned a 2015 MacBook Retina Core M can chime in down in the comments, I’d like to know if your thigh skin ever grew back or if you did need that graft. Intel, colloquially, has been making blast furnaces since the 1980s - this however was an egregious moment of under-delivering. Not only did the chips draw far more power than Apple was interested in, they produced so much heat as to be a genuine concern to users, who reported little MacBooks overheating, shutting down, and simply running slowly, as if they were always at 100C - which, testing would later prove, they were.

Was Apple at fault? Not as such, they and their vendor Foxconn used components that should have dissipated the heat without issue. The problem is that the Intel chip was drinking electricity and producing heat beyond what Intel had described to Apple. This (and I say this in speculation) I believe was a Brewster’s Millions challenge from Apple to Intel. They designed the laptop just fine, but the chip always ran too hot and was too power-hungry.

Thus, their desire to leave the marriage was set in Dieter Rams Industrial Design marble - wait, sorry, stainless steel. Apple had more than two decades of experience designing silicon in-house at this point, and had been designing their entire ecosystem around the Darwin kernel, which worked perfectly on ARM on their then ubiquitous iPhones and iPads. The challenge was lost, Intel could not measure up, and like Motorola/IBM it would be resigned to the “Vintage Hardware” list. This was obviously a warning shot across the bow that Intel did not mistake - and so they spent the next few years tinkering, tweaking, partnering with their now-ex’s suppliers (like TSMC and ASML) in order to “bring things up to date”.

Unfortunately for them…

A wild DR. LISA SU appeared!

Intel isn’t just facing stiff competition from the lifestyle brand, it is having its core business model devoured; skeleton and sinew alike. By who? Apple can’t even crack 20% of the portables segment on a great day, but they’re happy being niche. So who’s the threat?

AMD. Of course. I’ve waited this long to even mention them, aside from the history lesson. That’s because AMD, from 2008-2015, wasn’t really doing that hot. It was where Intel is today - making chips that ran hot, underperformed, were expensive and lacked software support; it had a bad hand in spades. Anyone who owned an AMD FX processor (includes PS4 owners) can attest that Bulldozer and generations nearby were, to be polite, awful. The cheap variants were prone to lag in a way that was reminiscent of the Pentium 4 due to a lack of cache, and the expensive ones ran too hot to be of any use.

So what did AMD do? Well, firstly, it did what Intel already did, fired its then CEO Rory Read - replacing him with Dr. Lisa Su, who holds a doctorate in electrical engineering (semiconductors), and who then (I presume) waved her magic wand and conjured a competent team to begin designing a clean-sheet ethos/design for their next project, Ryzen.

I don’t think I need to say much at this point. In 2017, as Intel was entering its “rut” era, AMD was nose-to-grindstone eking every ounce of performance from a single watt, iterating through the years on it - sometimes besting Intel, offering features that Intel could no longer support such as AVX-512, itself a work-around to a stupid problem that Intel also -ahem- drug its feet about.

Ryzen and its high-end desktop platform twin “Threadripper” / “Ryzen Pro” have been a smash commercial success, with Ryzen offering special editions like the 5800X3D/7800X3D, which carry 3D V-Cache (extra L3 cache stacked directly on the die), acting as an extremely large and extremely fast cache - something Intel has yet to offer in the consumer space, reserving such things for its Xeon chips, which are locked behind enterprise/large business pricing. AMD competes with Intel in this space as well, and has been growing market share in the small/med/enterprise corporate IT space.

AMD didn’t just eat Intel’s lunch on computer processors in the consumer and corporate segments, though that would be enough to make me faint if I were an INTC shareholder. AMD shockingly was able to do all of this in a fabless state - that is, AMD does not own and operate its own semiconductor fabrication facilities and instead prefers to partner with others such as TSMC and GlobalFoundries. AMD does not have the financial overhead of Intel, as it does not need such laborers and workers, such equipment, such land, such energy and water to produce. It leaves that to a vendor. In doing so, it gains more flexibility in design and engineering - whereas Intel has to split its focus between the two, and in the end when it is caught with its pants down, all it can do is increase the power consumption of its product from last year, and perhaps get another 2-4% of performance from it.

Now, though that plays to AMD’s favor, and though Intel is the one in the awkward position of “too big to fail” right now, it really is in a pretty good position to compete - just not this cycle, and not this year. Allow me to explain.

The good news at the end of the diverse product cycle

This isn’t to say Intel is down and out forever, quite the contrary. Intel itself has been reorganizing the company, shedding some divisions such as SSDs, while entering others (ARC Graphics Cards). It is clearly in a transformative period, and isn’t afraid to try new things, or take criticism on the chin. It is working with TSMC. It has ASML offices adjacent to its fabs, and ASML equipment in them. It hires top talent. It pays employees top dollar.

BUT.. It is a very precarious place to be. The global computer market has been in a slump since stay-at-home COVID orders were ended and the emergency was un-declared. People simply are not doing as much work-from-home and telecommuting as they were a few years prior. On top of this, playing against all industry players but Intel in particular, is that computers and consumer tech goodies are now reaching a point of competence where they are durable and robust enough to last 7-10 years. People simply are not buying computers (or phones, or tablets, or TVs) every year.

Businesses do, though in economic hardships computers/tech are often the first thing that gets skipped in the pecking order of replacement. Computers live extra long lives in offices - it is not uncommon to see businesses, including Intel itself, running computers from 20 years ago. So it is left to iterate, or spend and write a new clean sheet design.

Intel has something playing to its advantage many forget about - it entered the foundry-as-a-service space, competing directly WITH TSMC and GlobalFoundries for orders from companies LIKE Apple and AMD. So even if AMD and Apple are not buying Intel processors and do not have Intel inside, they could still have Intel inside - their chips could be made inside the same Intel factory where the ARC graphics card, the AX wifi card and the 14700K are made. This includes, worth mentioning, MIL/IND orders - Intel is open to producing materials for USDOD, and other ‘friendly’ nations we have yet to sanction.

For what it’s worth, Intel has had a silicon engineering genius from AMD in its wings until March this year, Raja Koduri - who left to form a generative AI startup. Hired in 2017, he was instrumental in helping bring the company to a chiplet/tile design, and forcing their hand in taking up the gambit of EUV and moving away from 22nm lithography. Raja is hard to describe, friendly, genuine, honest, straightforward - but he isn’t too far from the ordinary. Not what one would see as a “visionary genius”, yet he was part of the brains behind AMD’s Radeon graphics. Somehow, not even Intel could make his talents really work, or perhaps they became so muted behind bad marketing that they were lost in translation.

It is sad to see Intel squander such talent, and let it slip through its fingers so easily. Raja is hard to replace in his capacity, though Intel seems to be coping just fine with his absence - if you include iterative nothing as coping. I have no doubt that Intel has competent engineers somewhere - clearly not in Santa Clara or at Ronler Acres. I have no doubt they have competent line workers and machinists and other tradesmen/women giving it their all every day, many of them in my town, some of whom I consider neighbor, friend and even lover. That does not excuse the failures of their marketing department, and the shortcomings of their architecture.

With Tock 14 (15th Generation), it needs to be a whole new ‘tick’. Intel has something up its sleeve for that, which it has been teasing for some time - x86S. It’s another stopgap measure in the place where a clean-sheet design should be, but Intel clearly prefers the iterative to the transformative.

I’m going to end this write-up on a very simple point. Intel’s product segmentation and “generations” as they stand on store shelves today are stagnant and don’t make any sense to the consumer. There is not enough difference and daylight between products/generations to justify their existence. As Steve Burke of GamersNexus described 10th Tock (11th Generation), 13th tock (14th Generation) is also in fact a waste of sand. They are a waste of the energy of the reticle runner pushing the cart with the mask, they are a waste of the electricity and water used in the making. They are a waste of time, effort, energy, resources, and money. It’s a waste of a paper box and ink that it comes in.

I don’t think Intel’s shareholders want to hear this, but they need to hear it - so maybe, perhaps, on the next investor call, they can voice their displeasure with the products and market segmentation. In my small part, I’ll be keeping my 11th tock (12th Gen, N305) until Gracemont gets replaced. I want to see an actual generational shift, rather than a mild tweak around the edges.

To close out, here’s a full product review by someone who is far funnier, far smarter and far more thorough than I am.

There was some book about a computer company of the eighties. Soul of a New machine, Kidder. What you are writing about reminds me of that. That is my one connection to this information.

Oddly fascinating. I think that’s testament to your writing. Computer chipzzzzzzzzzzzz are not usually capable of raising even an eyelash on me. But hey, always a first.

I did chuckle internally to my caption for the Jobs/intel guy photo…

Weird Space Alien presents gift to Earth Astronaut…..